Powerloom DSV Mainnet Is Live: BDS, Verified Data, and the Agentic Data Economy

TL;DR:

Powerloom’s Decentralized Sequencer-Validator network is now live on mainnet for the Blockchain Data Services datamarket. BDS is serving verified Uniswap V3 data in production, supported by 3,000+ daily active Snapshotter Lite slots, six stable validator nodes, and more than 500,000 finalized snapshot batches committed on-chain.

This marks a major shift for Powerloom: decentralization is no longer just a protocol goal. It is how the network is operating today. With BDS live, $POWER is now connected to a working datamarket economy spanning snapshotters, validators, verifiable data demand, and the next phase of agent-first consumption.

We have spent the last few years building toward a simple but ambitious goal: useful datamarkets that do not depend on a single company, a single backend, or a single privileged sequencer.

- 3,000+ daily active Snapshotter Lite slots

- 6 stable, long-running validator nodes

- 500k+ finalized snapshot batches committed on-chain with a 99% completion rate

- Foundation-operated Resolver node serving structured Uniswap V3 data on top of the datamarket, with cryptographic provenance

- Agentic datamarket economy, with $POWER as the currency of consumptive utility, powered by the headless CLI as well as OpenClaw integration

Decentralization is no longer only an architectural direction for us; it is how the network is operating.

The preparation and build-up

Staking, validator consensus, agentic demand for accurate, verified data, and protocol-native incentives now reinforce one another, creating the positive feedback cycle of $POWER utility within the datamarket economy that we have always envisioned.

In our last couple of blog posts, we shared the larger scope of the protocol's direction: decentralizing sequencing and validation, and launching the agentic datamarket economy with the BDS preview.

Since then, we went live with the DSV Devnet followed by DSV Mainnet Alpha for validators who had expressed their interest in joining the Validator program. This helped us iron out major operational issues.

Between those two launches, we ran tests and simulations at mainnet scale for almost two months to fine-tune networking parameters and on-chain protocol state configurations and to fix concurrency and deadlock issues, all without compromising the open, decentralized, and permissionless nature of the consensus and aggregation layer.

While we were prepping the DSV mesh, with an observed finalization rate of 99%+ among the participating validators in the test networks, we also:

- offered the first Ecosystem grant to BazaarX

- released Snapshotter Loyalty Rewards

- onboarded and prepared Snapshotter Lite nodes for the BDS datamarket on DSV Mainnet, well in advance with detailed instructions

Finally, on March 3, 2026, we went live with the first epoch for the BDS datamarket.

The first epoch for the BDS data market is scheduled for release tomorrow (March 3) at 6:30 AM UTC.

Please ensure your nodes are online and that the mint dashboard shows recent simulation data. Setup and more information in 🧵👇 https://t.co/N4LF3NNS13

Powerloom Protocol (@Powerloom), March 2, 2026

Why this matters for $POWER

BDS gives $POWER a direct role in a working datamarket economy.

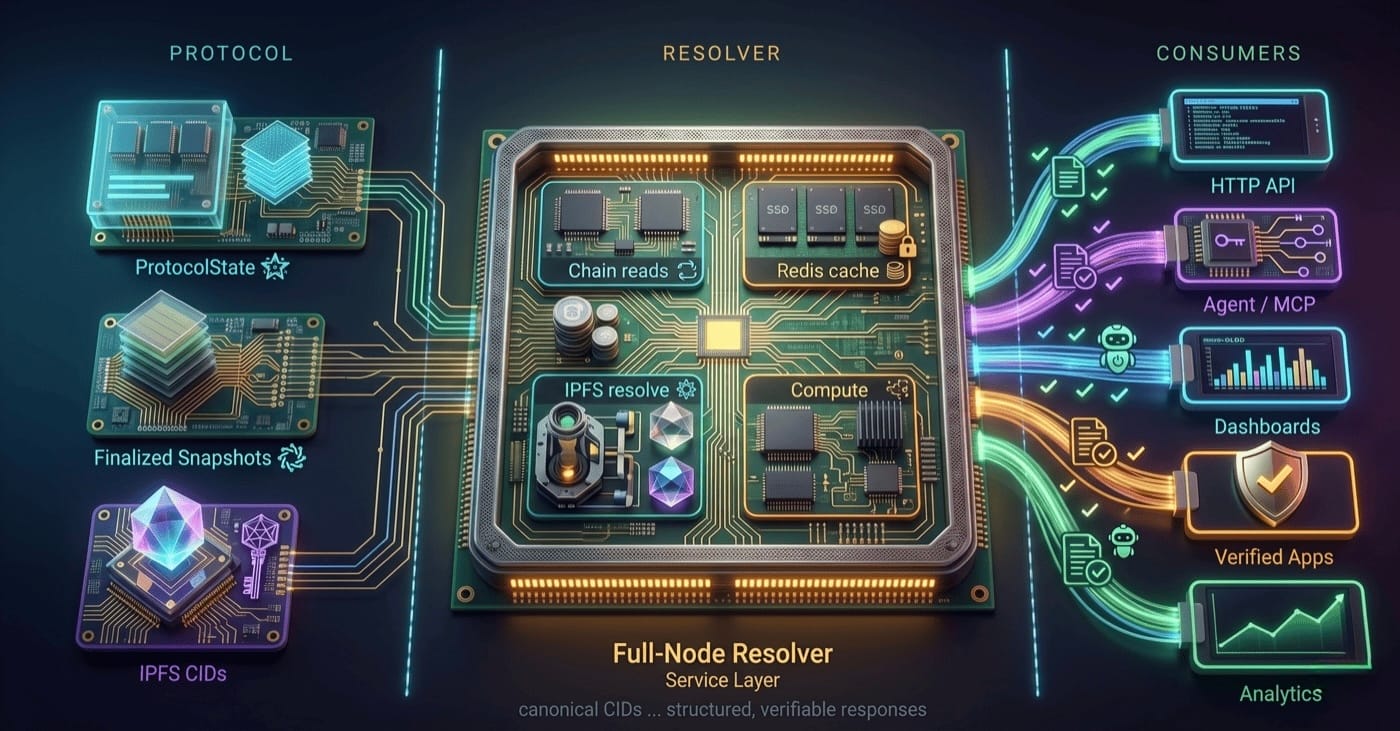

Snapshotters produce data ➡ validators finalize it ➡ resolvers serve it ➡ consumers access verified outputs. As agent-first consumption expands through OpenClaw, MCP, and bds-agent-py, $POWER becomes tied to data usage, not only network participation.

This is the feedback loop Powerloom has been building toward:

- useful datamarkets create demand for verified outputs;

- verified outputs require decentralized production and validation; and

- protocol-native incentives coordinate the participants who make that possible.

DSV Network: Evolution of the Consensus and Aggregation Layer of Powerloom Protocol

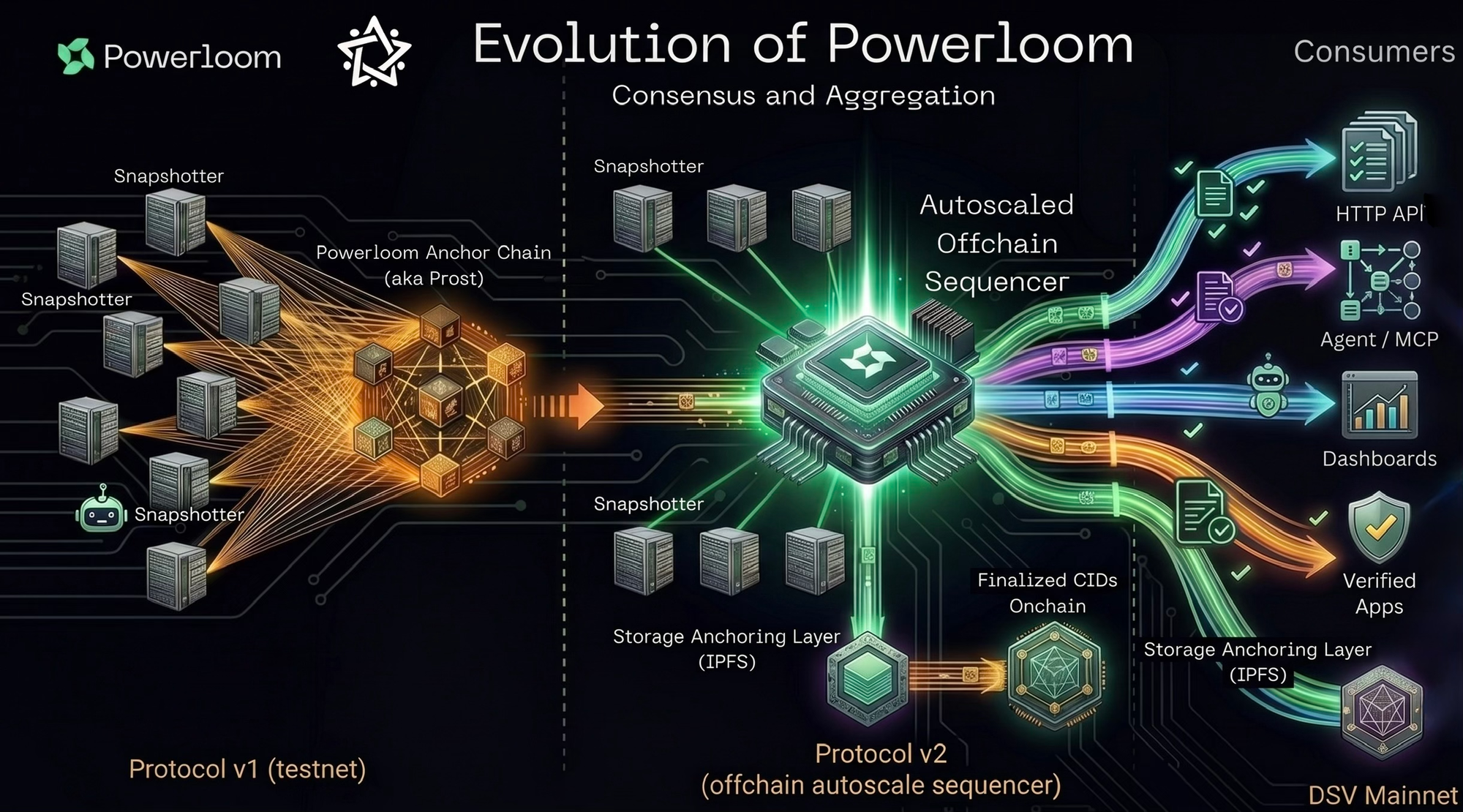

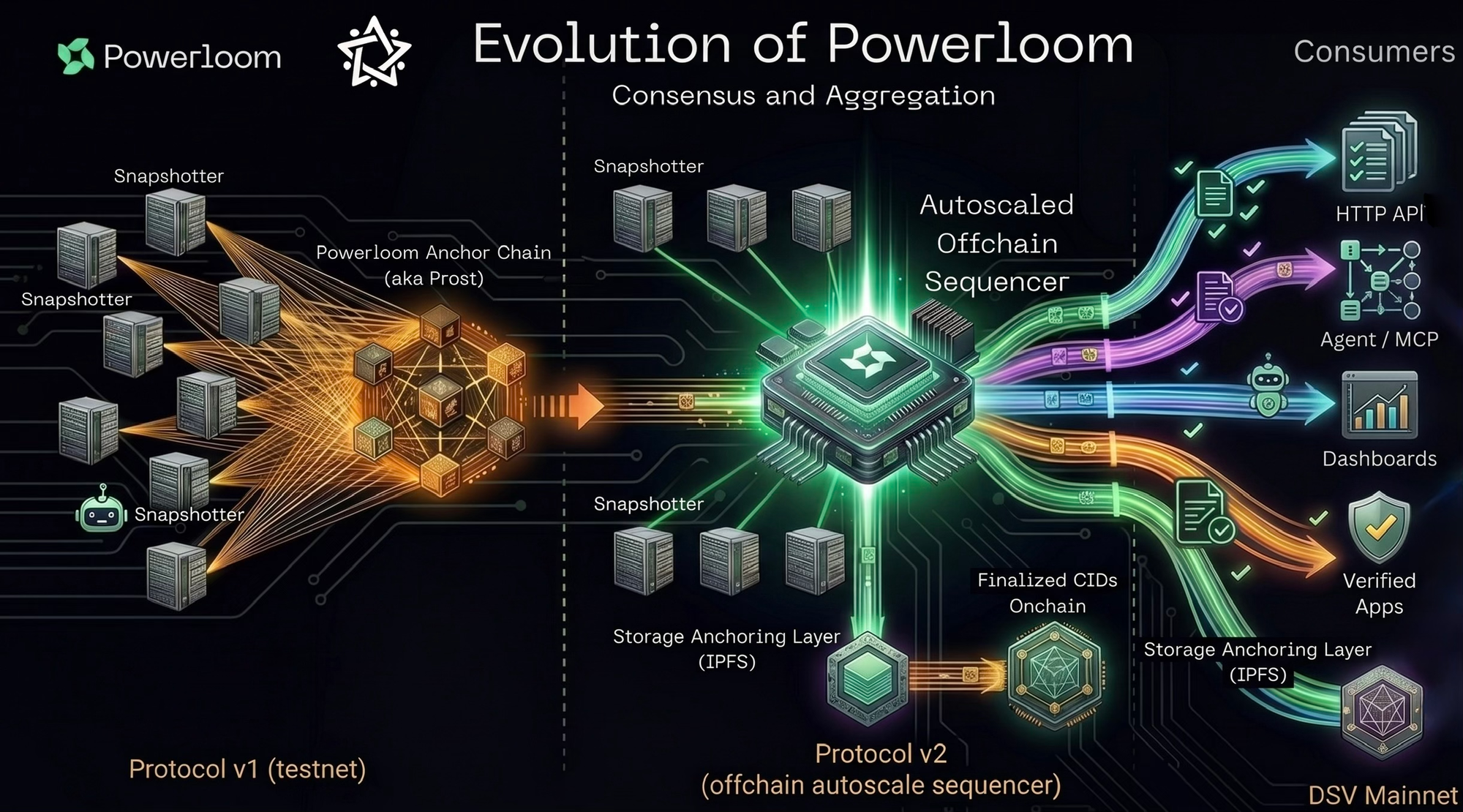

To recap, our protocol journey has gone through three distinct stages.

- Protocol v1: snapshotter nodes could compute useful data and anchor it on-chain. But every snapshot submission required its own on-chain transaction. That made the system expensive, slow, and unsuitable for high-frequency datamarkets.

- Protocol v2 was the performance breakthrough. It introduced a high-throughput sequencer that batched snapshots, uploaded payloads to IPFS, and anchored finalized batch CIDs on-chain through `submitSubmissionBatch()`. That removed the per-snapshot transaction bottleneck and made practical datamarkets possible.

But it still left one hard problem: the sequencer was centralized. Powerloom Foundation had to operate and maintain it, and had to make deep configuration changes whenever the network needed to support a new market or modify dynamic operational parameters, such as load balancing on the p2p listening interface or reward update batch sizes.

DSV Mainnet is the decentralization of that high-throughput sequencer architecture. Multiple validators now form a peer-to-peer mesh using libp2p and gossipsub. Snapshot submissions propagate across that mesh, are aggregated in two stages, and reach validator consensus before a finalized batch is anchored on-chain.

DSV keeps the performance gains of Protocol v2 while moving the sequencer responsibility out of a monolithic Foundation-operated service and into a validator network. This allows a free market economy to flourish and introduces the roles of curator and signaller peers, who act as high-fidelity filters in the creation of, and the call for participation in, useful datamarkets.

First 30 Days of DSV Mainnet Operations

The BDS mainnet market processes one-block epochs on Ethereum mainnet. Every Ethereum block is a new opportunity for snapshotter nodes to compute data, for validators to aggregate it, and for the finalized output to become available to downstream consumers.

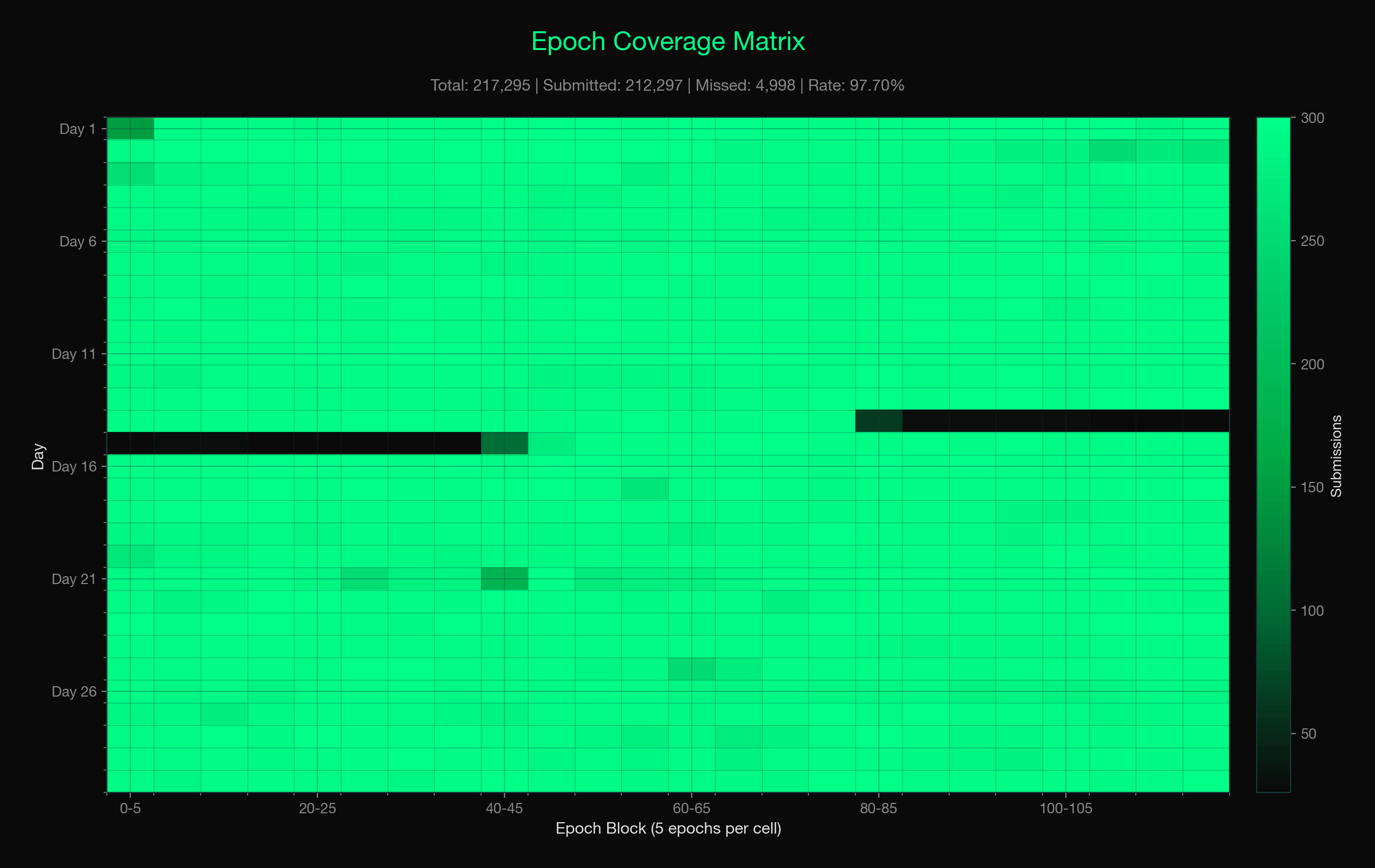

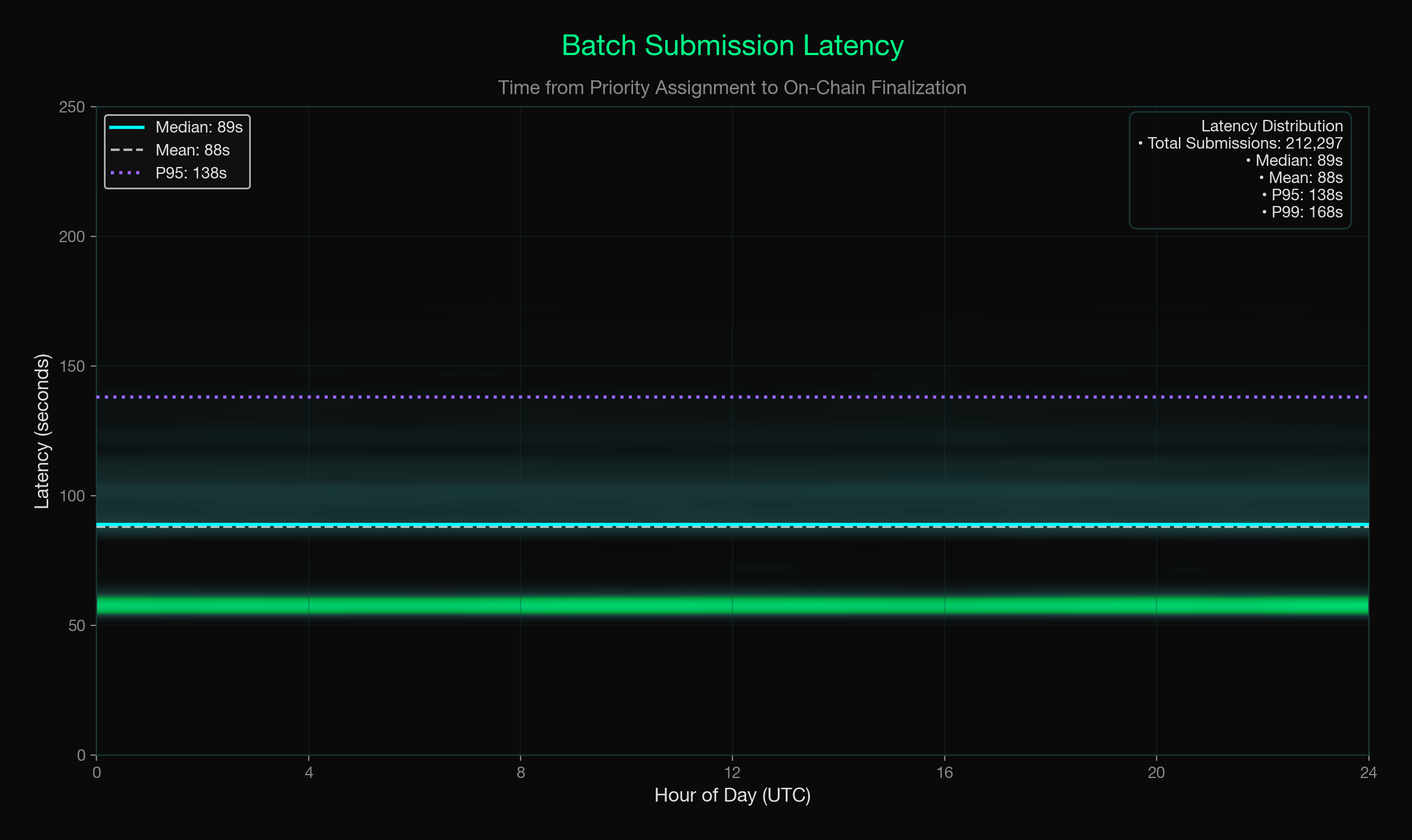

Across 217,295 total epochs assigned for batch submission, the validator layer submitted 212,297 batches and completed 210,200 of them. That represents a 97.7% submission rate across assigned epochs and a 99.01% completion rate once a batch was submitted. 26 of 30 days ran at the full steady-state cadence of 7,200 epochs per day. The batch submission pipeline ran with a median end-to-end latency of 89 seconds from priority assignment to on-chain finalization.
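The percentages above follow directly from the raw counts. As a quick sanity check, here is a minimal sketch that recomputes them; the variable names are illustrative, and the 12-second block interval is an assumption about Ethereum's slot time rather than a figure stated in this post.

```python
# Raw counts quoted in the paragraph above.
assigned_epochs = 217_295
submitted_batches = 212_297
completed_batches = 210_200

# ASSUMPTION: one Ethereum block (and so one BDS epoch) every 12 seconds.
SECONDS_PER_DAY = 24 * 60 * 60
BLOCK_INTERVAL_SECONDS = 12

epochs_per_day = SECONDS_PER_DAY // BLOCK_INTERVAL_SECONDS
submission_rate = submitted_batches / assigned_epochs
completion_rate = completed_batches / submitted_batches
missed_epochs = assigned_epochs - submitted_batches
miss_rate = missed_epochs / assigned_epochs

print(f"epochs per day:  {epochs_per_day}")
print(f"submission rate: {submission_rate:.1%}")
print(f"completion rate: {completion_rate:.2%}")
print(f"missed epochs:   {missed_epochs} ({miss_rate:.1%})")
```

Note that the completion rate is measured against submitted batches, not assigned epochs, which is why the two percentages are reported separately.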

Sustained behavior is the signal here: the network has stayed online, processed live demand, and produced verifiable outputs across mainnet operation.

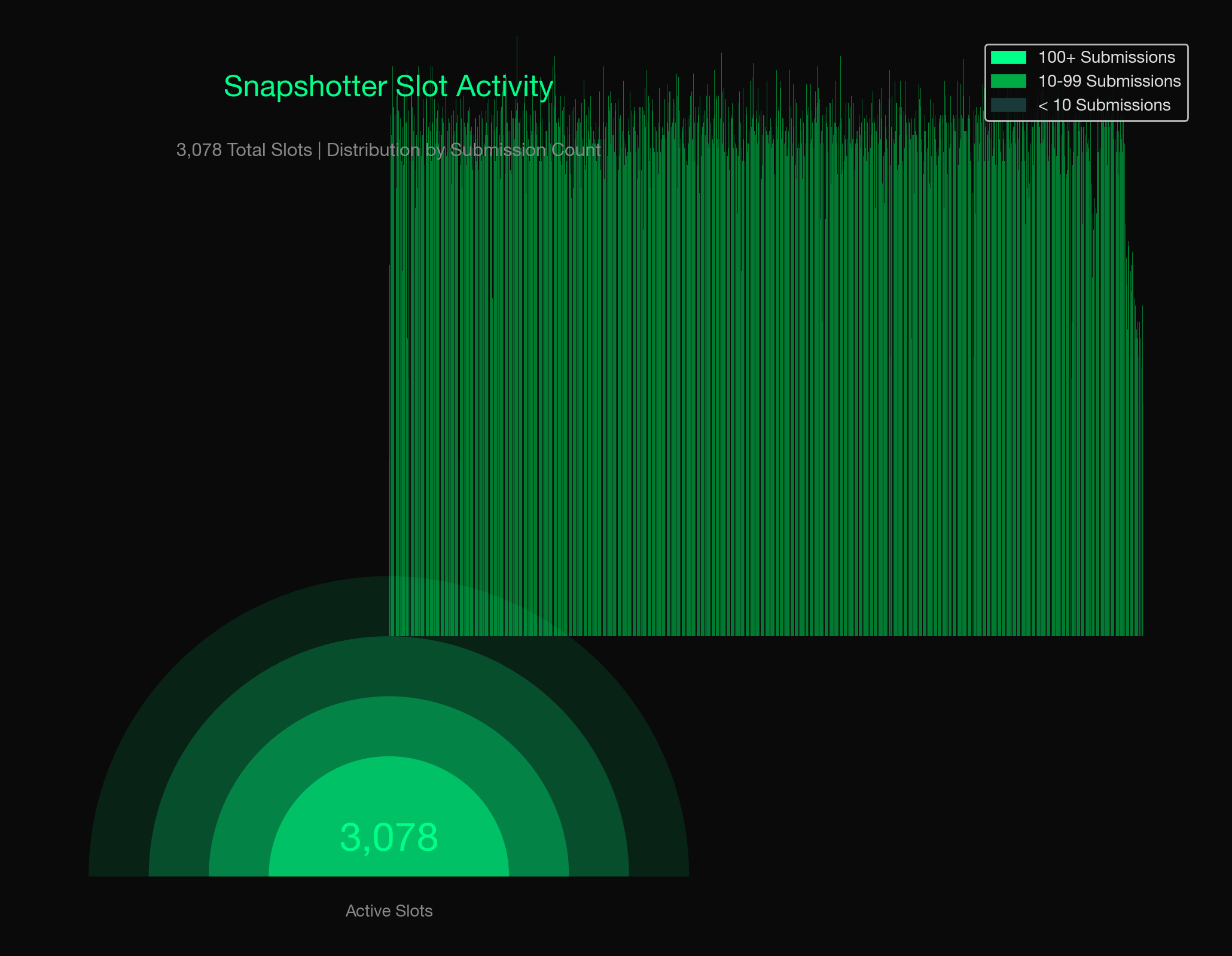

On the snapshotter side, a separate measurement of 1,811 epochs taken April 17–24 shows between 2,970 and 3,047 unique Snapshotter Lite nodes eligible for rewards each day, a number that grew through the week as the network expanded. Per epoch, the median eligible node count was 305, with between 3 and 6 validators providing independent batch attestations. Every measured epoch had at least 3 validators, the minimum required for consensus finalization.
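The finalization threshold described above can be pictured with a small sketch. The post only states that at least 3 validator attestations are required; the strict-majority agreement rule, the function name `finalized_cid`, and the data shape below are assumptions made for illustration, not the protocol's actual implementation.

```python
from collections import Counter
from typing import Optional

# From the text: the minimum number of validators required for finalization.
MIN_VALIDATORS = 3


def finalized_cid(attestations: dict[str, str]) -> Optional[str]:
    """Return the batch CID to finalize, or None if there is no quorum.

    `attestations` maps a validator ID to the batch CID it attested.
    ASSUMPTION: a strict majority of the attesting validators must agree
    on the same CID; the real DSV rule may differ.
    """
    if len(attestations) < MIN_VALIDATORS:
        return None
    cid, votes = Counter(attestations.values()).most_common(1)[0]
    return cid if votes * 2 > len(attestations) else None


# Hypothetical attestations for one epoch: two of three validators agree.
print(finalized_cid({"v1": "cidA", "v2": "cidA", "v3": "cidB"}))
```

Under this assumed rule, an epoch with fewer than three attestations, or with no majority CID, would simply not finalize.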

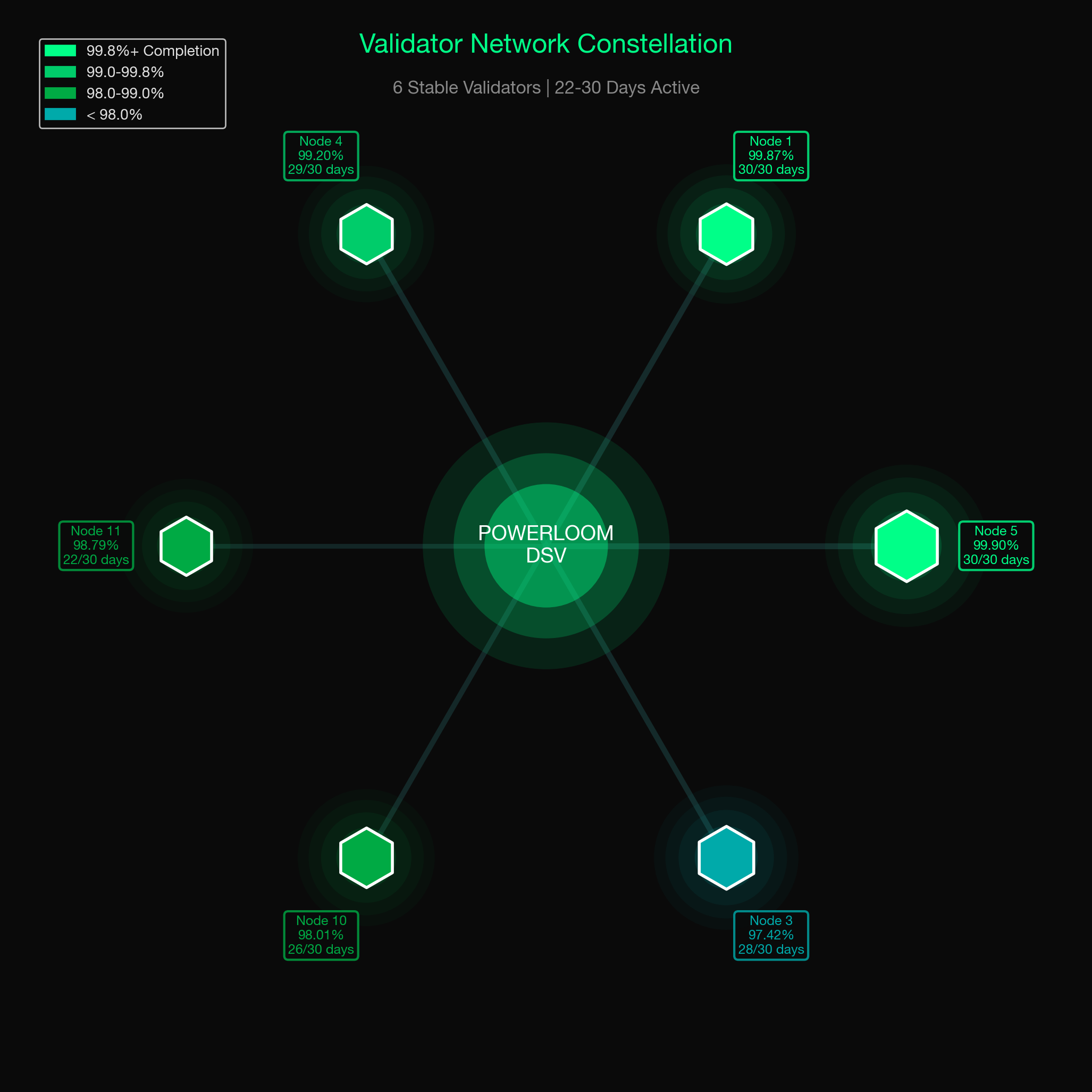

Validator reliability tells the clearest story. Of the validator nodes that operated during this period, 6 ran for 22 or more consecutive days.

Two of them, Node IDs 1 and 5, ran the full 30-day window with 100% uptime and completion rates of 99.87% and 99.90%, respectively. Across the six stable long-running nodes, the aggregate completion rate was 98.95% over 193,475 total submissions.

The six stable long-running validators showed the following reliability profile:

| Operator node | Active days | Completion rate |
|---|---|---|
| Node 1 | 30/30 (100%) | 99.87% |
| Node 5 | 30/30 (100%) | 99.90% |
| Node 4 | 29/30 (96.7%) | 99.20% |
| Node 3 | 28/30 (93.3%) | 97.42% |
| Node 10 | 26/30 (86.7%) | 98.01% |
| Node 11 | 22/30 (73.3%) | 98.79% |

Three additional transient nodes participated for shorter periods during operator testing and rotation.
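To make the reliability table easier to reuse, here is a minimal sketch that rebuilds the active-day percentages from the day counts and averages the per-node completion rates. The dictionary layout and variable names are illustrative assumptions; the numbers are copied from the table.

```python
WINDOW_DAYS = 30

# node name -> (active days, completion rate in percent), from the table.
validators = {
    "Node 1": (30, 99.87),
    "Node 5": (30, 99.90),
    "Node 4": (29, 99.20),
    "Node 3": (28, 97.42),
    "Node 10": (26, 98.01),
    "Node 11": (22, 98.79),
}

# Share of the 30-day window in which each node was active.
active_pct = {
    name: round(days / WINDOW_DAYS * 100, 1)
    for name, (days, _) in validators.items()
}

# Simple (unweighted) mean of the per-node completion rates.
unweighted_mean = sum(rate for _, rate in validators.values()) / len(validators)

for name, pct in active_pct.items():
    print(f"{name}: active {pct}% of the window")
print(f"unweighted mean completion rate: {unweighted_mean:.3f}%")
```

The 98.95% aggregate quoted earlier is computed over all 193,475 submissions, so it weights busier nodes more heavily and need not equal this unweighted mean.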

The 2.3% of epochs with no batch submission were caused primarily by epoch broadcast failures during mesh stabilization. These were infrastructure and network-density issues rather than failures of the consensus design itself.

Apart from that, once a validator is selected by VPA to submit a batch for an epoch, it completes that batch 99.01% of the time.

The network's consensus and completion behavior is already operating at production quality. The remaining coverage gaps come from mesh density: more validators with better-connected bootstrap peers should push epoch coverage toward 100%. Near-term infrastructure work is focused exactly there.

All figures are derived from on-chain events on Powerloom mainnet (chain 7869) and are fully reproducible. Contract addresses and methodology: docs.powerloom.io/dsv-mainnet/stability-and-scale.

BDS: The First Datamarket on DSV Mainnet

The first market covers Uniswap V3 on Ethereum mainnet:

- trade snapshots

- pool state

- token volumes

- liquidity distributions

- streaming trades

These are not simply database rows served from a hosted backend. They are outputs of a decentralized data pipeline: computed by snapshotter nodes, finalized through DSV, resolved through the BDS service layer, and exposed to applications, analysts, and agents.

The BDS metered API, served by resolvers or full nodes, offers structured, verifiable data to agents, applications, dashboards, and monitoring systems. Every response includes a verification object:

    {
      "verification": {
        "cid": "bafybeif...",
        "epochId": 24785718,
        "projectId": "allTradesSnapshot:0x26c44e5CcEB7Fe69Cffc933838CF40286b2dc01a:mainnet-BDS_MAINNET_UNISWAPV3-ETH"
      }
    }

Any consumer can independently verify the returned CID against the ProtocolState contract on the Powerloom anchor chain:

    cast call \
      0x1d0e010Ff11b781CA1dE34BD25a0037203e25E2a \
      "maxSnapshotsCid(address,string,uint256)(string,uint8)" \
      0x26c44e5CcEB7Fe69Cffc933838CF40286b2dc01a \
      "allTradesSnapshot:0x26c44e5CcEB7Fe69Cffc933838CF40286b2dc01a:mainnet-BDS_MAINNET_UNISWAPV3-ETH" \
      24785718 \
      --rpc-url https://rpc-v2.powerloom.network

If the CID matches, the data was finalized by DSV consensus. Consumers do not have to trust the server alone; they can verify the provenance of the data against the protocol.
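For consumers who want to script this check, here is a minimal sketch that turns a response's verification object into the same `cast call` arguments and compares the result with the CID returned by the API. The helper names `build_cast_call` and `cid_matches` are hypothetical, the data market address is passed in explicitly rather than derived from the project ID, and the exact way `cast` prints string return values is not assumed here, so the comparison simply strips whitespace and surrounding quotes.

```python
# Values taken from the verification example in this post.
PROTOCOL_STATE = "0x1d0e010Ff11b781CA1dE34BD25a0037203e25E2a"
DATA_MARKET = "0x26c44e5CcEB7Fe69Cffc933838CF40286b2dc01a"
RPC_URL = "https://rpc-v2.powerloom.network"
SIGNATURE = "maxSnapshotsCid(address,string,uint256)(string,uint8)"


def build_cast_call(verification: dict, data_market: str = DATA_MARKET) -> list[str]:
    """Build the argument list for the `cast call` shown above."""
    return [
        "cast", "call", PROTOCOL_STATE, SIGNATURE,
        data_market,
        verification["projectId"],
        str(verification["epochId"]),
        "--rpc-url", RPC_URL,
    ]


def cid_matches(api_cid: str, onchain_cid: str) -> bool:
    """Compare the API's CID with the CID read from the ProtocolState contract."""
    return api_cid.strip() == onchain_cid.strip().strip('"')


verification = {
    "cid": "bafybeif...",  # truncated in the post; use the full CID from a real response
    "epochId": 24785718,
    "projectId": "allTradesSnapshot:0x26c44e5CcEB7Fe69Cffc933838CF40286b2dc01a:mainnet-BDS_MAINNET_UNISWAPV3-ETH",
}
print(" ".join(build_cast_call(verification)))
```

The argument list can be handed to `subprocess.run` on a machine with Foundry installed, and the first value the contract returns passed to `cid_matches` together with the `cid` field from the API response.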

The full endpoint catalog, verification patterns, and the snapshotter full-node architecture are documented at Powerloom docs.

The next series of releases will expand the consumptive side of the agentic datamarket economy: agent-first consumption through OpenClaw, MCP, and bds-agent-py. That is where BDS stops being only an API and becomes a programmable data layer where autonomous agents can discover, configure, pay for, and verify data.

What's Next For Consumers

In our next post, we'll cover what's already built on top of all of this today:

- Agent-first access to BDS data through OpenClaw

- Headless agent CLI `bds-agent-py` and framework-neutral agent onboarding

- MCP support

What's Next for the DSV Network

- Mesh strengthening

- Signaller and curator peers

- Data market tiering

Documentation

The full architecture, protocol workflow, on-chain contracts, and operational signals are now documented:

- DSV Mainnet — architecture, roles, protocol workflow, verification, incentives, stability

- BDS Datamarket — what BDS is, the snapshotter resolver, endpoint catalog, verification pattern

- Agents & BDS — agent consumption paths (coming in the next post)

Near-term work for DSV network

Mesh strengthening. The 2.3% epoch miss rate is primarily a bootstrap and coverage problem. Increasing validator mesh density and improving bootstrap peer infrastructure should reduce the impact of node restarts and brief disconnects.

Signaller and curator peers. Signallers and curators are the next step toward permissionless data market creation. Signallers stake $POWER to vote on data market prioritization, while curators assess the quality and relevance of proposed markets. With DSV and BDS proving that the execution layer works, these roles can move more of the network’s market-selection process away from the Foundation and toward protocol participants.

Datamarket tiering. Not all data is the same, and not all consumers need the same thing. The architecture ahead accommodates two distinct modes:

- High-frequency, fast datamarkets: optimized for latency, suited for close-to-the-edge trading applications. These markets prioritize speed and accept a narrower validator set for faster finalization. Think sub-minute snapshots of DEX trade flows or mempool-adjacent data.

- Medium-to-slow, consensus-first datamarkets: optimized for verifiability and redundancy. These markets run more validators, require broader snapshotter consensus before finalization, and accept slightly higher latency in exchange for provably accurate data. Suited for risk systems, compliance use cases, and anything where accuracy matters more than milliseconds.

In Conclusion

DSV proves that Powerloom’s high-throughput data market architecture can operate without a single privileged sequencer.

BDS proves that the architecture can serve real, verifiable data in production.

The next step is to make this economy increasingly self-directed: more validators, stronger mesh coverage, signaller and curator peers, tiered data markets, and agent-first consumption paths that allow autonomous systems to discover, pay for, and verify data directly.

That is the direction Powerloom has been building toward from the beginning: useful, verifiable data markets coordinated by protocol participants rather than a centralized backend.

Spread the word. Make sure you're in our community (and don't miss exciting updates): Discord | X | Telegram | GitHub | Website | LinkedIn